One of the most common mistakes in enterprise AI adoption is treating it as a single, uniform event. “We’re deploying AI — now everyone is more productive.”

The technology lands, the announcement goes out, and leadership moves on. But the people sitting in different roles across the organization are not experiencing the same thing. Not even close.

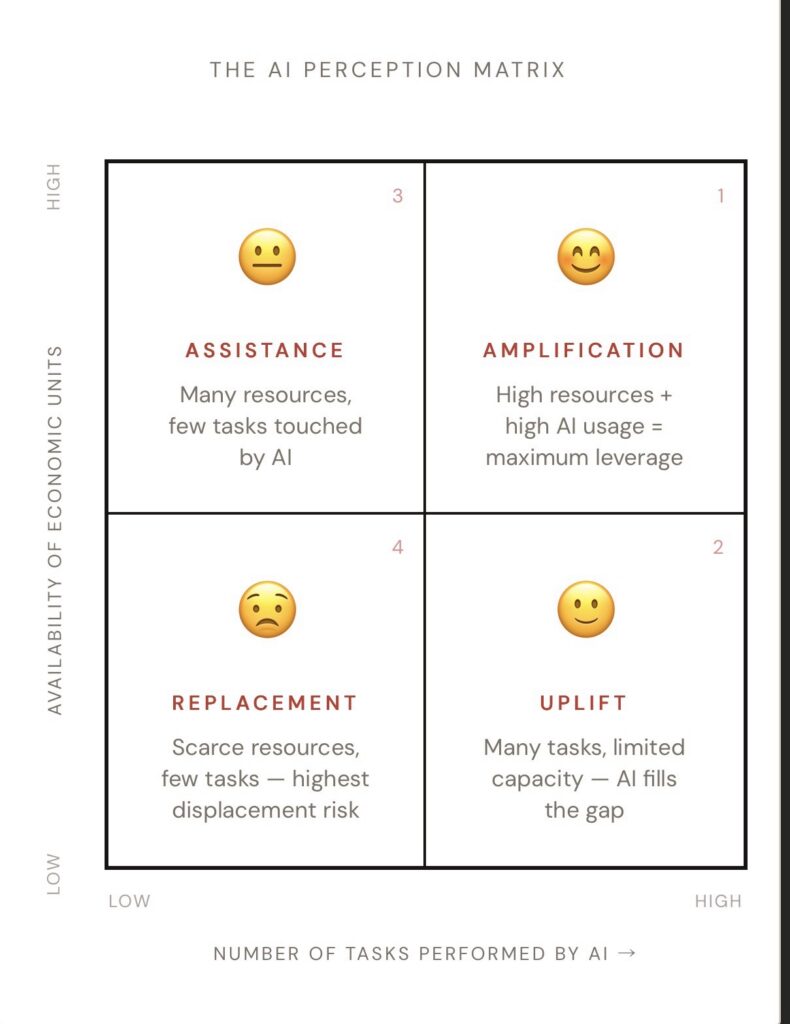

The framework below is an attempt to make that visible — and to give business leaders a more honest vocabulary for change management conversations.

The Two Variables That Actually Matter

Before getting to the quadrants, it’s worth being precise about what we’re measuring.

Variable 1: Task Coverage

The first variable is the number of tasks a person performs that AI can meaningfully touch. This isn’t a binary. A research analyst producing morning briefings, sector summaries, and earnings commentary might find that AI can assist with 60% of their daily output. A specialist doing one highly technical, narrow function might find that number is closer to 10%. The more surface area AI has across someone’s workflow, the higher they sit on this axis.

Variable 2: Availability of Economic Units

The second variable is the availability of economic units — the pool of “things to be processed” that a role has access to. This is the less obvious axis, and it’s the one that changes everything. In an asset management context, a fund reporting analyst produces a fixed number of regulatory reports each quarter — the universe of reportable funds doesn’t grow because they work faster. In contrast, a portfolio manager could theoretically cover more positions, more markets, more client mandates, if only they had more hours in the day. One universe is bounded. The other isn’t.

These two variables are independent of each other, and their combination produces four meaningfully different experiences of the same technology.
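The bounded versus unbounded distinction in the second variable can be made concrete with a small calculation. The sketch below is illustrative only: the function name `units_processed`, the hours, and the pool sizes are hypothetical figures chosen for the example, not data from any real team. It assumes output is limited either by a person's time or by the pool of available units, whichever is smaller.

```python
from typing import Optional


def units_processed(hours: float, hours_per_unit: float,
                    pool_size: Optional[int]) -> float:
    """Units completed in a period; pool_size=None models an unbounded pool."""
    if hours_per_unit <= 0:
        raise ValueError("hours_per_unit must be positive")
    capacity = hours / hours_per_unit  # what the person has time for
    if pool_size is None:
        return capacity  # unbounded: time is the only limit
    return min(capacity, pool_size)  # bounded: the pool caps output


# Hypothetical numbers: 400 working hours, AI halves the time per unit.
reports_before = units_processed(400, 10, pool_size=40)      # bounded pool
reports_after = units_processed(400, 5, pool_size=40)
positions_before = units_processed(400, 10, pool_size=None)  # unbounded pool
positions_after = units_processed(400, 5, pool_size=None)

print(reports_before, reports_after)      # bounded: output is unchanged
print(positions_before, positions_after)  # unbounded: output doubles
```

With these assumed inputs, the bounded role still produces 40 reports after the speed-up, while the unbounded role goes from 40 to 80 units. The same time saving shows up as more output in one case and as spare hours in the other.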

The Four Quadrants of AI Impact

Quadrant 1 — Amplification 😊

High task coverage × Unbounded economic units

This is the best-case scenario, and it’s the one that tends to dominate the public narrative around AI. Think of a portfolio manager who could theoretically cover not ten positions, but a hundred. The constraint has always been bandwidth, not opportunity. When AI removes the bandwidth ceiling, the result is unambiguous: they can do more of the same valuable work, at greater scale, with greater depth. AI is experienced as a pure amplifier. There is no perceived threat and no ambiguity; adoption tends to happen organically because the upside is immediate and obvious.

Quadrant 2 — Uplift 🙂

High task coverage × Finite economic units

Here there are many tasks AI can assist with, but the pool of work is constrained. Even as AI automates some of the lower-value activities, there is enough remaining work to keep the person meaningfully employed. Crucially, the tasks AI tends to absorb first are usually the ones people least enjoy — repetitive, administrative, low-stakes. The result is genuine uplift: friction removed, purpose intact. These employees often become enthusiastic adopters once they understand what changes and what doesn’t.

Quadrant 3 — Assistance 😐

Low task coverage × Finite economic units

This is where the tone shifts, and where many organizations underestimate the complexity of the situation. The role is narrower — fewer tasks for AI to touch — and the economic unit pool is finite. Consider a fund reporting analyst whose entire role centers on producing a quarterly set of regulatory documents for a fixed book of funds. AI accelerates the process, but the number of reports doesn’t increase. The work doesn’t expand to absorb the freed capacity. It simply shrinks. AI is perceived as assistance — still useful, not overtly threatening — but the emotional texture is already different. There is a creeping sense that the value of the role is being quietly compressed. The music changes, even before anyone says anything.

Quadrant 4 — Replacement 😟

Low task coverage × Finite economic units, narrow role

The most difficult quadrant. A role focused on one or two tasks, with a finite and possibly shrinking pool of work. When AI can automate or significantly accelerate those tasks, there is nothing to absorb the freed time. No “and then I’ll do more of something else.” The fear here is rational. It doesn’t require catastrophizing. It’s a straightforward reading of a real situation, and it deserves to be treated as such — not dismissed, not managed with platitudes, but addressed with honesty about what the transition actually looks like and what organizational investment it requires.
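As a rough aid, the quadrant labels above can be read as a small lookup. The sketch below is one possible encoding, not a definitive scoring model: the function `place_in_matrix`, the 0.5 cut-off between "low" and "high" task coverage, and the `narrow_role` flag (a role centered on one or two tasks) are all assumptions introduced for illustration. The combination of low coverage with an unbounded pool is not described in the quadrants, so the sketch leaves it unclassified rather than guessing.

```python
from enum import Enum
from typing import Optional


class Quadrant(Enum):
    AMPLIFICATION = "Amplification"
    UPLIFT = "Uplift"
    ASSISTANCE = "Assistance"
    REPLACEMENT = "Replacement"


def place_in_matrix(task_coverage: float,
                    pool_is_unbounded: bool,
                    narrow_role: bool = False,
                    high_threshold: float = 0.5) -> Optional[Quadrant]:
    """Map a role to a quadrant label.

    task_coverage: share of tasks AI can assist with, from 0.0 to 1.0.
    high_threshold: assumed cut-off for "high" coverage (hypothetical).
    narrow_role: assumed flag for a role focused on one or two tasks.
    """
    if not 0.0 <= task_coverage <= 1.0:
        raise ValueError("task_coverage must be between 0.0 and 1.0")

    high_coverage = task_coverage >= high_threshold
    if high_coverage:
        # High coverage: the pool decides between the first two quadrants.
        return Quadrant.AMPLIFICATION if pool_is_unbounded else Quadrant.UPLIFT
    if pool_is_unbounded:
        # Low coverage with an unbounded pool is not covered by the labels.
        return None
    # Low coverage, finite pool: narrowness separates the last two quadrants.
    return Quadrant.REPLACEMENT if narrow_role else Quadrant.ASSISTANCE


# Hypothetical roles, using the 60% and 10% coverage figures as inputs.
print(place_in_matrix(0.6, pool_is_unbounded=True))
print(place_in_matrix(0.1, pool_is_unbounded=False, narrow_role=True))
```

In practice the inputs would come from conversations with line managers rather than precise measurement, so the output is best treated as a starting point for discussion, not a verdict on any individual role.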

Why This Matters Before You Walk Into the Room

When presenting an AI initiative to business stakeholders, the temptation is to flatten the narrative. “AI will help everyone.” Over long time horizons and with the right organizational changes, this may be true. But in the near term, at the individual level, it is not true for people in Quadrant 3 and Quadrant 4 — and they know it.

If you walk in with a one-size-fits-all message, you will generate resistance from the employees who are reading their situation most accurately. The framework gives you the language to get ahead of that. It lets you segment the workforce honestly, anticipate the different emotional responses, and design change management interventions that are actually calibrated to each group’s reality — rather than optimized for the group with the easiest story to tell.

Three Questions to Run the Diagnostic

Before any AI deployment conversation, ask these three questions for each role or team:

- How many of their daily tasks can AI meaningfully assist with?

  You don’t need a technical audit. A structured conversation with line managers about how people actually spend their time is usually enough.

- Is their economic universe finite or unbounded?

  Are they processing a fixed set of outputs each period, defined by regulation or a static book of business? Or could they theoretically scale their output indefinitely if time weren’t the constraint?

- What happens to the freed capacity?

  If AI handles 30% of their current work, what fills that space? More demand waiting to be served? Higher-value work they never had time for? Or nothing, because the workload is fixed and there’s no obvious alternative?

The answers place each group in the matrix. And the matrix tells you not just what message to deliver, but what you actually owe each group in terms of honesty, investment, and organizational support.

Conclusion: Change Management for AI Is Not One Conversation

Change management for AI is not one conversation. It is four conversations, happening simultaneously, with different people who have legitimate but very different stakes. The organizations that acknowledge this complexity will navigate the transition more effectively — and more ethically — than those that don’t.

There is a lot of work to do. This is where that work starts.